Build

We've solved it before. We'll solve it again.

“We scaled from 10K to 100K users without any performance issues.”

We leverage cloud and AI technologies to transform business strategies into seamless digital systems, end to end, with No Drama and No Surprises.

We bring the right skills and processes that your business demands. No Guesswork, No Compromises.

We don't start from zero: your industry's playbook is already in our hands.

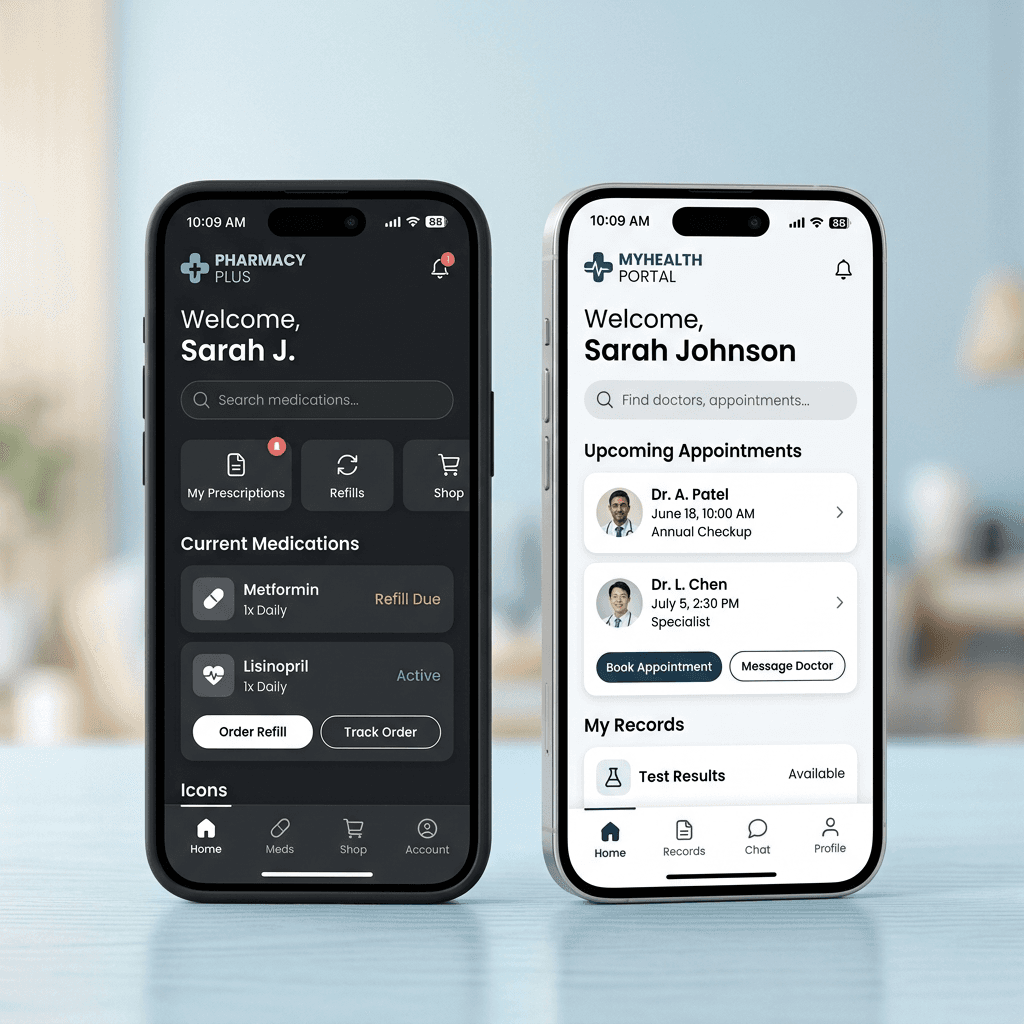

We don't just bring Healthcare expertise to a Healthcare problem.

We bring speed from logistics, real-time monitoring from IoT, and simplicity from education. Your solution doesn't just get healthcare best practices; it gets the best of everything. Every industry sharpens the next. Yours gets all of it.

Ysquare demonstrates a strategic problem-solving mindset and takes a holistic view to find innovative and efficient ways to facilitate product delivery.

At Ysquare, we establish working models offering genuine value and flexibility for your business.

Build your product iteratively through our value-driven custom development approach.

Augment your team with the right skills & expertise tailored for your product roadmap.

Retain your product expertise through seamless product & team transition.

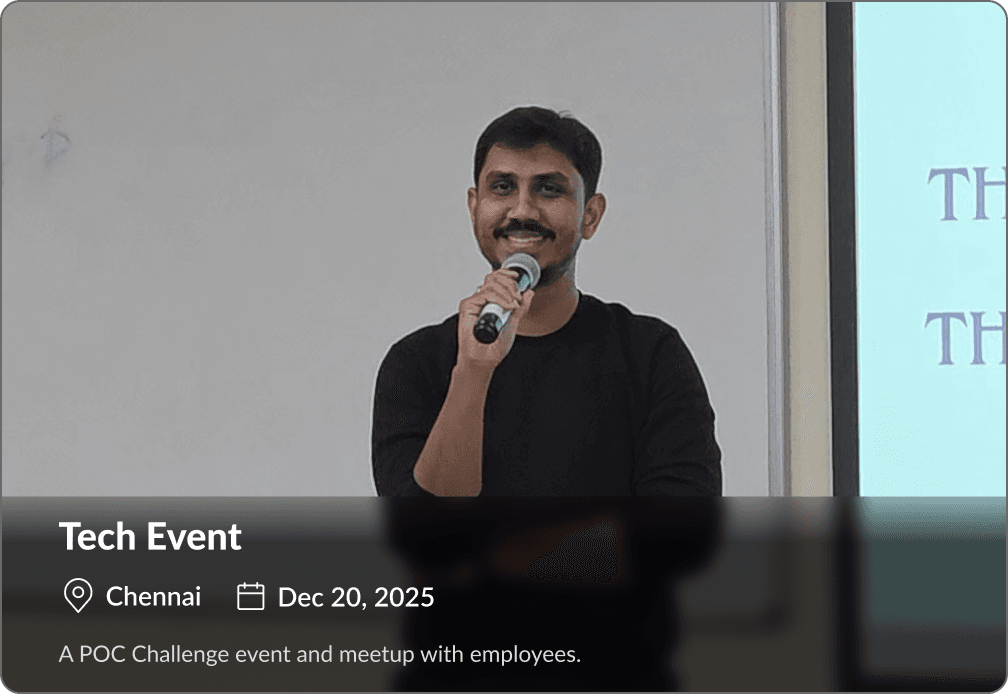

Discover how Ysquare has helped businesses accelerate growth, build innovative solutions, and achieve remarkable results.

“Ysquare has a young and energetic team of professionals very passionate about creating positive impact through their work. We had a very transparent and agile team that enabled us to achieve our aggressive goals.”

“Ysquare team is quality-oriented and professional to work in terms of accommodating our requests for mutual wins. Highly recommend Ysquare Team for technology outsourcing partnerships!”

“Ysquare has been a valuable accelerator for our tech team expansion. We quickly found the right skills, by staying focused on our core roadmap. Their team is quality-oriented to work in terms of accommodating our requests for mutual wins. Highly recommend Ysquare Team for technology outsourcing partnerships!”

“Wanted to take a moment to appreciate all the effort made by the Ysquare team in making our idea come alive as an application. I know it's been a roller coaster ride, but I really appreciate the support provided by the team.”

“Their team is quality-oriented and professional to work in terms of accommodating our requests for mutual wins. They have been a valuable accelerator for our team expansion. Found the right skills, by staying focused on our core roadmap.”

Most clients start with a 30-minute discovery call. We'll tell you honestly which engagement makes sense and which one doesn't.

What you get from us

Find the Right Solution

Tell us about your needs and we'll point you in the right direction.

Read our latest blogs on web development, AI solutions, cloud technologies, and digital innovation shaping the future.

Find answers to the most commonly asked questions about our services, process, pricing, and support.